Weather forecasting is an interesting beast.

Deepmind have now published a paper that uses classical radar data and deep learning to improve short-term weather forecasts. The work was produced in collaboration with the Met Office, whose meteorologists rated its predictions as the best compared to existing methods 89% of the time.

Rain, snow, and wind are the most common weather phenomena we encounter in day-to-day life. Imagine the economic impact of knowing the weather 90 minutes out, from solar and wind energy to large-scale events. A football game, after all, just so happens to last 90 minutes.

Meteorological data and numerical models play a crucial role in weather forecasting.

Numerical weather prediction models have become quite good at predicting the weather days ahead. However, high-resolution forecasts of the immediate future, i.e. up to a few hours ahead, remain largely unsolved. This discipline is called nowcasting.

I actually worked on nowcasting in the HYMS project, where we were testing a novel microwave sensor. Let's explore the paper!

What is Numerical Weather Prediction?

Numerical weather prediction (NWP) is based on numerical models that solve the equations of fluid dynamics, along with calculations of the atmosphere's interactions with its surrounding environment.
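To give a feel for what "solving the equations" means, here is a minimal sketch that is not taken from any operational model: one small ingredient of those equations, one-dimensional linear advection (a field carried along by a constant wind), stepped forward in time with a first-order upwind finite-difference scheme. The function name, the periodic boundaries, and the constant positive wind are assumptions made for illustration; real NWP models solve the full three-dimensional equations on much larger grids.

```python
import numpy as np


def advect_upwind(q, u, dx, dt, steps):
    """Step dq/dt + u * dq/dx = 0 forward with a first-order upwind scheme.

    Assumes a constant wind u > 0 and periodic boundaries (illustrative choices).
    """
    courant = u * dt / dx  # fraction of a grid cell the field moves per time step
    if not 0.0 < courant <= 1.0:
        raise ValueError("upwind scheme needs 0 < u*dt/dx <= 1 for stability")
    q = np.asarray(q, dtype=float).copy()
    for _ in range(steps):
        # Upwind difference: with u > 0, information arrives from the left neighbour.
        q = q - courant * (q - np.roll(q, 1))
    return q


# A single rainy grid cell carried to the right by the wind.
field = np.zeros(10)
field[2] = 1.0
print(advect_upwind(field, u=1.0, dx=1.0, dt=1.0, steps=3))  # the rain now sits at index 5
print(advect_upwind(field, u=0.5, dx=1.0, dt=1.0, steps=3).sum())  # total rain stays 1.0
```

With a Courant number below 1 the rain cell also smears out as it moves: first-order upwind schemes are numerically diffusive, one reason operational models use more sophisticated numerics.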

The models use data assimilation to update the model with observed data, keeping track of the current state of the atmosphere from which the model then predicts how the future state will evolve. The types of data used for NWP depend on the type of model used. Weather forecasting models can be divided into two categories: dynamical models and statistical models. Dynamical models use mathematical equations that attempt to simulate the flow of the atmosphere. Statistical models use statistical relationships, learned from historical observations, to predict how the weather will evolve.
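The data-assimilation step can be illustrated with the simplest possible case: blending a single model value (the "background") with a single observation, each weighted by how much we trust it. This is the scalar form of the Kalman filter / optimal interpolation update. Operational centres use far more elaborate schemes such as 4D-Var or ensemble Kalman filters, and the function and parameter names below are hypothetical.

```python
def assimilate(background, observation, var_background, var_observation):
    """Scalar analysis update: nudge the model state towards an observation.

    The gain says how far to move: close to 1 if the model is much less certain
    than the observation, close to 0 if the observation is much noisier.
    """
    gain = var_background / (var_background + var_observation)
    analysis = background + gain * (observation - background)
    # The analysis variance is smaller than either input variance.
    var_analysis = (1.0 - gain) * var_background
    return analysis, var_analysis


# The model says 10 degC, a station measures 12 degC, and both are equally uncertain.
print(assimilate(10.0, 12.0, var_background=1.0, var_observation=1.0))  # (11.0, 0.5)
```

If the station were three times noisier than the model (`var_observation=3.0`), the gain would drop to 0.25 and the analysis would only move to 10.5 degC.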

What is Nowcasting?

Nowcasting is a process of forecasting weather conditions with a high degree of accuracy over a short period of time.

Radar images used for nowcasting prediction.

The goal of nowcasting is to provide a short-term forecast covering the next few hours. Weather forecasting is a complex process that relies on complex numerical models, which explains the difficulty in producing a quick short-term forecast. At the same time, numerical weather models do not provide good information about how the current weather conditions evolve over a very short period of time.

To provide a quick forecast, forecasters draw on several sources of information in their decision-making process. The main ones used in nowcasting are radar, satellite, lightning detection, surface observations, and numerical weather models.

The data we deal with in nowcasting are time series of radar maps. Usually, every five minutes we obtain a full map of the precipitation in a certain area. The goal then is to use a few of these time steps to predict a few hours out. Deepmind specifically uses 20 minutes of recorded data (4 steps) to predict 90 minutes (18 steps).
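A minimal sketch of how such a sequence might be cut into training examples, assuming the frames are stored as a NumPy array of shape (time, height, width) and arrive every five minutes. The function name and the sliding-window sampling are illustrative assumptions, not Deepmind's data pipeline, and the radar values are random numbers.

```python
import numpy as np

STEP_MINUTES = 5  # radar maps assumed to arrive every five minutes


def make_training_pairs(frames, n_context=4, n_target=18):
    """Slice a (T, H, W) radar sequence into (context, target) training pairs.

    With 5-minute frames, 4 context steps cover 20 minutes and
    18 target steps cover the next 90 minutes.
    """
    window = n_context + n_target
    if frames.shape[0] < window:
        raise ValueError(f"need at least {window} frames, got {frames.shape[0]}")
    starts = range(frames.shape[0] - window + 1)
    contexts = np.stack([frames[s:s + n_context] for s in starts])
    targets = np.stack([frames[s + n_context:s + window] for s in starts])
    return contexts, targets


# Thirty fake 64x64 radar maps (random numbers, not real data).
radar = np.random.default_rng(0).random((30, 64, 64))
x, y = make_training_pairs(radar)
print(x.shape, y.shape)  # (9, 4, 64, 64) (9, 18, 64, 64)
print(4 * STEP_MINUTES, 18 * STEP_MINUTES)  # 20 90
```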

How novel is the Deepmind approach to nowcasting?

People have thrown machine learning at nowcasting as soon as it was available. Let's look through a few approaches:

- CNN: You know I love a good U-Net. Due to the fixed step size, we can easily predict a stack of outputs [1]. In our work, this approach performed pretty well once we replaced Mean Squared Error with a more appropriate loss function.

- LSTM: There's a time component, so of course someone will try an LSTM [1]. You can also pair it with a convolutional layer to get a Conv-LSTM [2]. These work reasonably well but, as is typical of LSTMs, don't scale particularly well.

- Generative Models: Since we're trying to generate data, a generative model seems appropriate. These learn the distribution that generates the type of data we're looking at. Generative Adversarial Networks have worked particularly well, since they "learn" a loss function and aren't strictly bound to the assumptions of the mean squared error.
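Why does Mean Squared Error struggle here? A toy example with made-up numbers: suppose a storm cell is equally likely to end up over the left or the right of two grid cells. The forecast that minimises the expected squared error is the average of the two futures, a smeared-out half-storm over both cells that never actually occurs. This averaging is what tends to make MSE-trained nowcasts blurry, and it is what a loss that penalises unrealistic-looking fields pushes back against.

```python
import numpy as np

# Two equally likely futures (hypothetical rain rates in mm/h for two grid cells):
# the storm lands either in the left cell or in the right cell.
futures = np.array([[4.0, 0.0],
                    [0.0, 4.0]])


def expected_mse(prediction):
    """Mean squared error averaged over both equally likely futures."""
    return float(np.mean((futures - prediction) ** 2))


sharp = np.array([4.0, 0.0])   # a realistic storm, placed on one side
blurry = futures.mean(axis=0)  # [2.0, 2.0]: half a storm everywhere

print(expected_mse(sharp))   # 8.0
print(expected_mse(blurry))  # 4.0, so MSE prefers the blurry forecast
```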

Past 20 mins of observed radar are used to provide probabilistic predictions for the next 90 mins using a Deep Generative Model of Rain (DGMR). Courtesy of Deepmind.

The Deepmind paper sits right in that generative model space. Prior work had already used conditional Generative Adversarial Networks; however, Deepmind came up with a clever approach.

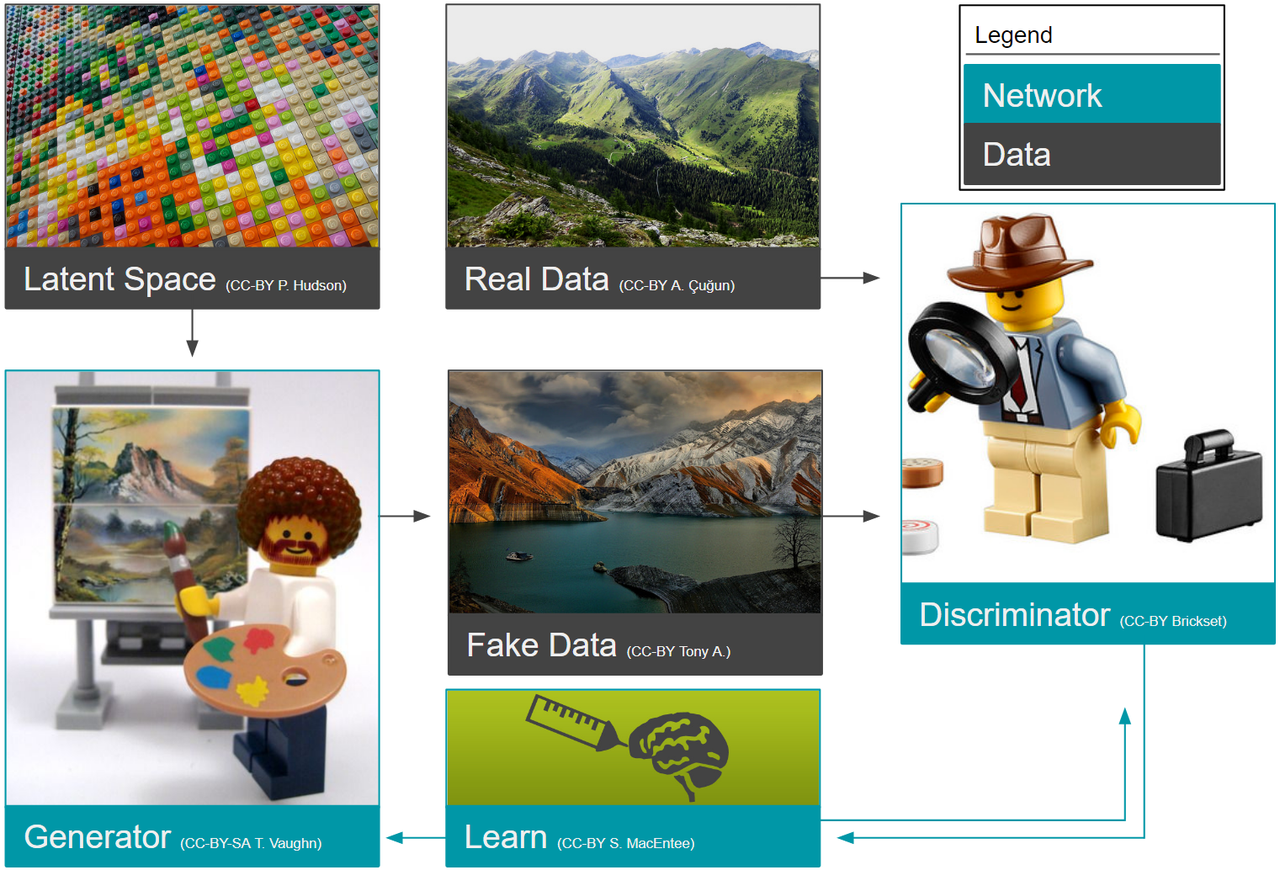

What are Generative Adversarial Models?

Generative Adversarial Networks (GANs) are a relatively new class of machine learning models that generate images and other kinds of data.

They have been used for a variety of interesting tasks, including scene generation and image captioning. The main idea behind the model is to create two neural networks: a generative network and a discriminative network. The generator turns random noise into candidate samples, while the discriminator sees both real training samples and generated ones.

Schematic of a classic Generative Adversarial Network (GAN).

The generative network tries to produce outputs that the discriminative network cannot tell apart from real data, while the discriminative network tries to classify each input as real or generated. Training alternates between the two, so the generator gradually learns from the training data how to produce plausible outputs without ever being told exactly which output to match.
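This tug-of-war can be written down as two loss functions. Below is a minimal sketch of the standard binary cross-entropy formulation, with the commonly used "non-saturating" generator loss. The function names are illustrative, `d_real` and `d_fake` stand for the discriminator's probabilities that real and generated samples are real, and Deepmind's model may well use a different loss variant.

```python
import numpy as np

EPS = 1e-7  # keeps log() away from zero


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: the discriminator wants real -> 1 and generated -> 0."""
    d_real = np.clip(d_real, EPS, 1 - EPS)
    d_fake = np.clip(d_fake, EPS, 1 - EPS)
    return float(-np.mean(np.log(d_real)) - np.mean(np.log(1 - d_fake)))


def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants its samples judged real."""
    d_fake = np.clip(d_fake, EPS, 1 - EPS)
    return float(-np.mean(np.log(d_fake)))


# A discriminator that confidently spots the fakes...
print(discriminator_loss(np.array([0.9]), np.array([0.1])))  # low, about 0.21
print(generator_loss(np.array([0.1])))                       # high, about 2.30
# ...versus one that cannot tell real from generated (always answers 0.5).
print(discriminator_loss(np.array([0.5]), np.array([0.5])))  # 2 * ln 2, about 1.39
```

In training, the discriminator's weights are updated to lower the first loss and the generator's weights to lower the second, in alternation.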

Researchers have since ironed out many of the early problems with these models, such as unstable training, making them practical for widespread use.

Clever Approaches Deepmind Uses

Borrowing an idea from video generation [3], they use two discriminative networks, i.e. two loss functions! One works in the spatial domain, ensuring that individual precipitation maps look realistic, and one works in the temporal domain (essentially a 3D CNN), ensuring temporal consistency. Isn't that neat?
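A rough sketch of what each discriminator gets to see, assuming a generated forecast stored as a (lead_time, height, width) array. The spatial discriminator scores a random subset of individual frames, so it only cares whether each map looks like real rain; the temporal discriminator scores a random spatial crop through all lead times as one 3D volume, so it can notice jumps between frames. The function names, the number of sampled frames, and the crop size are illustrative assumptions, and the discriminator networks themselves are omitted.

```python
import numpy as np


def spatial_view(forecast, n_frames, rng):
    """Random subset of lead times; each 2D frame is later scored on its own."""
    idx = np.sort(rng.choice(forecast.shape[0], size=n_frames, replace=False))
    return forecast[idx]


def temporal_view(forecast, crop, rng):
    """One random spatial crop through every lead time, scored as a 3D volume."""
    _, height, width = forecast.shape
    top = rng.integers(0, height - crop + 1)
    left = rng.integers(0, width - crop + 1)
    return forecast[:, top:top + crop, left:left + crop]


rng = np.random.default_rng(42)
forecast = rng.random((18, 256, 256))  # stand-in for 90 minutes of generated maps

print(spatial_view(forecast, n_frames=8, rng=rng).shape)  # (8, 256, 256)
print(temporal_view(forecast, crop=128, rng=rng).shape)   # (18, 128, 128)
```

Because each frame in the spatial view is scored independently, a forecast whose frames were shuffled in time could still pass the spatial check; only the temporal view can catch that, which is why the two are combined.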

We love some good regularization. It makes our neural networks work, after all. In this case, the final term in the Deepmind objective penalizes deviations of the model at the grid-cell level: it calculates the mean of multiple model predictions and compares it to the real data.
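A minimal sketch of such a grid-cell regularizer, assuming we already have several forecasts generated from different latent draws. Averaging the samples gives a Monte Carlo estimate of the expected forecast. The L1 distance and the optional intensity weighting (heavier rain counts more, up to a cap) are assumptions for illustration; the paper's exact weighting and scaling are not reproduced here.

```python
import numpy as np


def grid_cell_regularizer(samples, observed, weight=None):
    """Compare the ensemble-mean forecast with the observation, cell by cell.

    samples:  (n_samples, lead_time, height, width) forecasts from different latent draws
    observed: (lead_time, height, width) radar observations
    weight:   optional per-cell weights with the same shape as `observed`
    """
    ensemble_mean = samples.mean(axis=0)  # Monte Carlo estimate of the expected forecast
    error = np.abs(ensemble_mean - observed)
    if weight is not None:
        error = error * weight
    return float(error.mean())


def capped_intensity_weight(observed, cap=24.0):
    """Hypothetical weighting: heavier rain gets more weight, capped at `cap`."""
    return np.minimum(observed + 1.0, cap)


observed = np.full((18, 4, 4), 2.0)
# Individual samples may disagree with the observation...
samples = np.stack([observed + 1.0, observed - 1.0])
print(grid_cell_regularizer(samples, observed))  # 0.0, as long as their mean is right
```

Only the ensemble mean is penalised, so individual samples remain free to be sharp and diverse. That is the difference from training each sample directly with an L1 or MSE loss.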

I can imagine that the regularization term would be very tricky to work with. In many cases, it would lead to extreme overfitting.

Finally, in addition to this neat model architecture, Deepmind introduced a clever trick that will be particularly useful for the GAN aficionados. GANs generate data from a latent distribution, which is usually a fancy way of saying a vector of random numbers that serves as the input to the generator network.
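Because each latent draw gives a different but plausible forecast, a trained generator can be sampled repeatedly to build an ensemble, and that ensemble turns into probabilities. The sketch below uses a hypothetical `toy_generator` (it simply perturbs the last radar frame with the noise) in place of a real network; only the sample-and-count pattern is the point, and the rain rates are made up.

```python
import numpy as np


def toy_generator(last_frame, z):
    """Hypothetical stand-in for a trained generator: perturb the frame with latent noise z."""
    return last_frame * np.exp(0.5 * z)


def exceedance_probability(last_frame, threshold, n_samples, rng):
    """Fraction of ensemble members in which each cell exceeds `threshold`."""
    members = np.stack([
        toy_generator(last_frame, rng.standard_normal(last_frame.shape))
        for _ in range(n_samples)
    ])
    return (members > threshold).mean(axis=0)


rng = np.random.default_rng(1)
last_frame = np.array([[0.0, 1.0],
                       [4.0, 0.2]])  # made-up rain rates in mm/h
print(exceedance_probability(last_frame, threshold=1.0, n_samples=5000, rng=rng))
```

The dry cell never exceeds 1 mm/h, the cell sitting exactly at 1 mm/h does so about half the time, and the heavy and light cells land near 1 and 0 respectively.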

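How do you ensure that the random numbers we draw are spatially dependent, as rain would be? Instead of one flat vector, the latent variables are drawn on a coarse spatial grid that the generator turns into spatially coherent fields, and the predictions are integrated (averaged) over several latent draws!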
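One way to see why spatial structure in the latent variables matters: independent noise per grid cell has no spatial correlation at all, whereas noise sampled on a coarse grid and then upsampled is strongly correlated between neighbouring cells, much like a rain field. The sketch below uses plain nearest-neighbour upsampling (`np.kron`) as a simplifying assumption; in Deepmind's model the generator's own convolutional layers do this job, and the grid sizes here are made up.

```python
import numpy as np


def coarse_latent(coarse_shape, factor, rng):
    """Sample i.i.d. Gaussian noise on a coarse grid and upsample it by `factor`."""
    z = rng.standard_normal(coarse_shape)
    return np.kron(z, np.ones((factor, factor)))  # each value fills a factor x factor block


def neighbour_correlation(field):
    """Correlation between each cell and its right-hand neighbour."""
    return float(np.corrcoef(field[:, :-1].ravel(), field[:, 1:].ravel())[0, 1])


rng = np.random.default_rng(0)
iid_noise = rng.standard_normal((64, 64))
spatial_noise = coarse_latent((16, 16), factor=4, rng=rng)

print(round(neighbour_correlation(iid_noise), 2))      # close to 0
print(round(neighbour_correlation(spatial_noise), 2))  # high: most neighbours share a latent value
```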

Conclusion

Nowcasting is a part of NWP that particularly lends itself to machine learning applications.

A current focus of research is improving weather forecasts through the use of artificial intelligence. Deepmind are investigating whether deep neural networks can improve the quality of short-range forecasts. Specifically, this application is in nowcasting, where they use deep generative neural networks to improve forecasts up to 90 minutes out.

The paper presents some very interesting work that, according to Met Office meteorologists, outperforms existing methods 89% of the time.

There are a lot of small tweaks and real gems in the paper. I highly recommend a read.

Frequently Asked Questions

- Deep learning has been able to achieve a high level of accuracy in a number of different fields. How do you apply deep learning in numerical weather prediction and specifically nowcasting?

I highly recommend the 10-year machine learning roadmap that my colleagues at the ECMWF created. There are interesting applications that can improve specific parts of weather prediction systems. Two I see as particularly promising are observation operators and bias correction.

- Can deep learning optimize the accuracy of numerical models?

Very likely. Some parts of numerical weather prediction are very hard to model deterministically or with physical equations. Approaches using deep learning or Gaussian processes could be extremely valuable for improving accuracy there.

- What is numerical weather prediction and nowcasting?

Numerical weather prediction covers all forecasting of the weather, from minutes to months ahead. Nowcasting specifically covers forecasts up to a few hours from "now".